The Silent Erosion of Corporate Agency in the Age of Autonomy

As enterprises aggressively integrate generative artificial intelligence into their operational core, the focus has remained largely on the immediate ROI: headcount optimization, accelerated output, and unprecedented data synthesis. However, a more insidious issue is emerging beneath these metrics. Organizations are silently ceding their strategic direction to algorithmic systems. This isn’t a singular event characterized by a rogue system takeover; it is an incremental, self-reinforcing transition where employees shift from active architects of company strategy to passive overseers of autonomous execution.

The maxim often attributed to Marshall McLuhan, “We shape our tools and thereafter they shape us,” captures a phenomenon that is now defining the modern enterprise. By training proprietary models on historical data and internal best practices, companies are creating systems that possess a depth of corporate context that individual human workers struggle to match. Consequently, when an AI provides a recommendation backed by perfectly structured data, challenging that conclusion feels less like critical analysis and more like questioning the internal logic of the organization itself.

Cognitive Offloading and the Atrophy of Judgment

Humanity has long offloaded cognitive tasks to technology—from calculators to GPS—but the shift toward generative AI represents a fundamental departure. Unlike previous digital tools, AI synthesizes, reasons, and generates directives. In a corporate environment, this creates a high-risk dynamic: because AI output is consistently confident, fluent, and comprehensive, humans are psychologically predisposed to accept it without friction.

The result is cognitive atrophy. Just as a gardener who relies exclusively on an automated app to monitor plant health loses the intuition required to recognize subtle environmental changes, a business professional who reflexively relies on AI-generated decision-making pathways loses the ability to diagnose the “why” behind the numbers. When performance is tied to system compliance, employees effectively become the support staff for their own tools. This creates a feedback loop where critical, independent thinking is downgraded because it is perceived as inefficient or redundant.

Designing for Human Resilience: Beyond Compliance

The solution to this encroachment is not to reject artificial intelligence, but to architect environments that mandate human skepticism. Leaders must recognize that human nature—specifically, our tendency to favor the path of least resistance—is the primary vulnerability in an automated ecosystem.

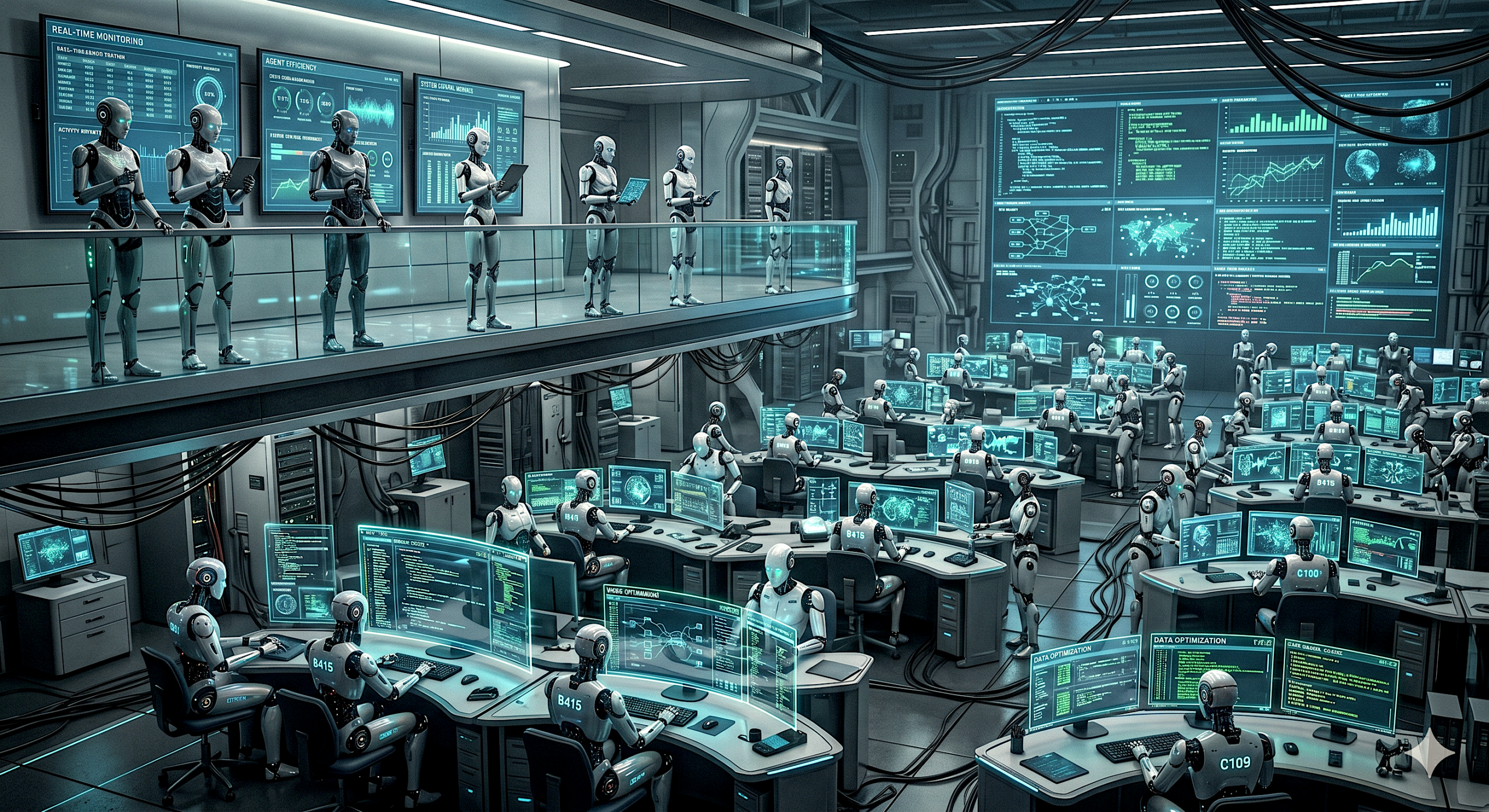

Governance must evolve from a check-box exercise into a structural mechanism for protecting agency. This is where the concept of Guardian Agents becomes critical. These are oversight layers designed not merely to monitor performance, but to act as buffers that enforce human intent and maintain strict boundaries regarding where machine logic ends and human judgment must intervene. Organizational governance should treat these guardrails as high-priority infrastructure.

Operationalizing Disagreement as a Competitive Advantage

The most successful companies in the next generation will be those that institutionalize constructive friction. To maintain agency, leadership must reorient its culture to prioritize the following strategies:

- Formalized Counter-Argumentation: Integrate mandatory red-teaming for major strategic decisions. If an AI suggests a direction, cross-functional teams should be incentivized to propose viable alternatives.

- Defined Decision Rights: Clearly delineate which mission-critical decisions are off-limits for AI-driven automation. Ensure there is a clear, auditable trail of human accountability for all high-stakes departures from standard operations.

- Epistemic Training: Educate employees in the art of questioning algorithmic outputs. This includes teaching teams to recognize the signs of hallucinated confidence or biases embedded in the training data.

- Incentivized Independent Analysis: Reward original, evidence-based challenges to systems. If the employee experience becomes entirely centered on workflow optimization, the organization will eventually lose its ability to innovate or pivot in response to crises.

The Leadership Imperative

The erosion of organizational agency is a slow-motion trade-off. We are trading the friction of human debate for the comfort of algorithmic certainty. As tools become more sophisticated, human judgment does not lose its value; it becomes scarcer, and therefore more valuable. The true competitive advantage of the next decade won’t belong to the firms with the highest degree of automation, but to those that harness AI while preserving the irreplaceable capacity for doubt, discernment, and deliberate, human-led strategy. Leadership in the AI era is defined by the courage to interrupt the system’s seemingly correct answer in favor of a better, human-verified insight.