The Shift to AI-Native Customer Experience

Amazon Ring’s recent transition to fully automated voice support signals a broader structural pivot in the customer service industry. By routing 100% of its inbound telephone traffic through the startup Vapi, Ring has effectively bypassed the traditional limitations of human-staffed call centers. This move serves as a bellwether for the enterprise sector, illustrating how large-scale firms are transitioning from experimental AI pilots to mission-critical infrastructure.

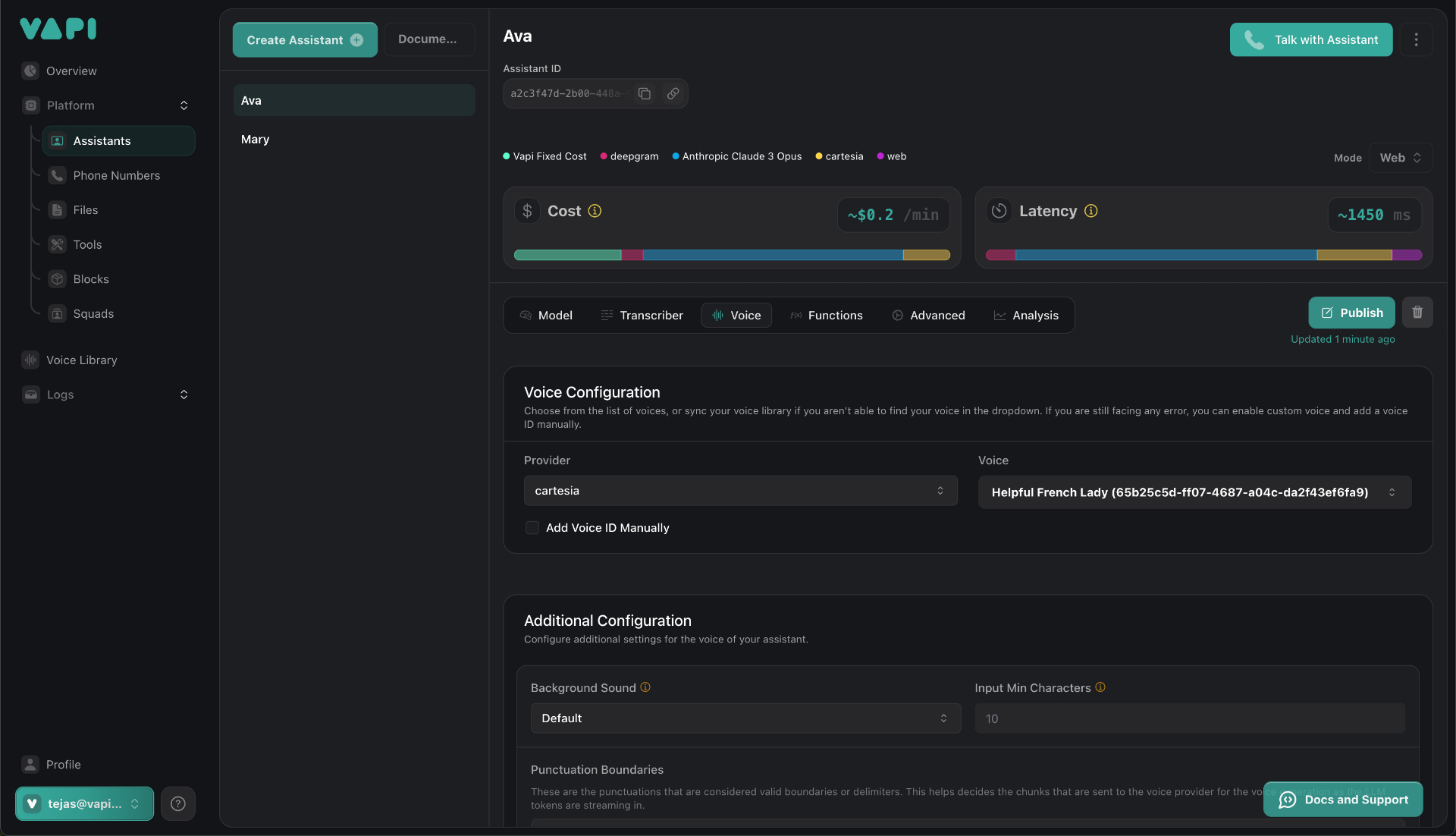

Ring chose Vapi over 40 other vendors because it needed low-latency performance and operational autonomy. According to Jason Mitura, Ring’s VP of software development, the primary benefit has been that non-engineering teams can calibrate the AI’s behavior themselves. This kind of self-service configuration is becoming a prerequisite as companies look to reduce their dependence on engineers for fine-tuning customer experiences.

Vapi’s Rapid Valuation and Strategic Positioning

The successful deployment at Ring played a pivotal role in Vapi securing a $50 million Series B funding round, valuing the company at approximately $500 million. Led by Peak XV Partners, this capital injection underscores investor sentiment regarding the infrastructure layer of generative AI.

Unlike competitors that focus on rigid, vertical-specific SaaS solutions, Vapi has positioned itself as the underlying orchestration engine for voice agents. Having pivoted from a niche AI-therapy project, founders Jordan Dearsley and Nikhil Gupta recognized that developers prioritized infrastructure performance, specifically latency, over monolithic app wrappers. This bottom-up adoption strategy, fueled by over a million developers using the company’s self-serve platform, provided the stress-testing needed to satisfy the rigorous compliance and reliability demands of enterprise clients like Intuit, New York Life, and UnityAI.

Taming the Generative AI Beast

The current landscape for conversational AI is undergoing intense consolidation. Vapi competes in a crowded market alongside peers like Sierra, Decagon, and PolyAI, all vying to minimize human intervention across the customer lifecycle. Vapi’s competitive edge, however, lies in its philosophy of taming the nondeterminism of large language models.

For modern enterprises, the primary barrier to AI adoption has not been the lack of conversational capability, but the lack of predictable control. Vapi’s focus on the orchestration layer allows companies to enforce consistency in voice interactions, ensuring that customer-facing agents adhere to strict brand guidelines and regulatory nuances.

Industry Implications for 2025

The scale of Vapi’s operation, which processes between 1 million and 5 million calls daily, suggests that we have exited the era of novelty voice AI. As usage accelerates across the enterprise, the industry is shifting its focus toward:

- Democratized Configuration: Reducing the engineering bottleneck by empowering operations teams to tune AI behaviors.

- Infrastructure-First Contracting: Prioritizing vendors who provide the orchestration framework rather than fixed, packaged applications.

- High-Volume Reliability: Moving beyond chatty chatbots to robust systems capable of managing complex, transactional, and high-stakes voice interactions.

With its new funding, Vapi is poised to scale its engineering and go-to-market teams. As the firm refines its ability to constrain the output of generative models for corporate use, it is positioning itself to set the standard for how global enterprises manage their voice-based service channels in the coming decade.