The Rise of Agentic Security: Microsoft’s MDASH Architecture

Microsoft has signaled a fundamental shift in vulnerability research by unveiling MDASH, the Multimodel Agentic Scanning Harness. Unlike traditional static analysis tools that rely on pattern matching, MDASH uses an ensemble of AI agents to conduct deep, context-aware analysis. The development marks a maturing of the AI-driven cybersecurity landscape, away from generative coding assistants and toward autonomous systems capable of finding, debating, and proving complex architectural flaws.

The platform recently demonstrated its efficacy by uncovering 16 previously unknown vulnerabilities in the core Windows networking and authentication stacks. Four of these findings were classified as critical remote code execution (RCE) flaws and received priority patches in the latest release.

Dissecting Complex Vulnerabilities

The significance of the vulnerabilities discovered by MDASH lies in their technical depth. These were not garden-variety buffer overflows reachable by basic automated scanners. Instead, the system identified sophisticated memory management bugs, such as a use-after-free in `tcpip.sys` and a double-free flaw in the IKEv2 service.

Because these defects spanned multiple source files and triggered only under specific concurrent state conditions, they were effectively invisible to legacy scanners. MDASH’s success in surfacing them suggests that agentic workflows are gaining the reasoning capabilities needed to map interprocess communication (IPC) boundaries and kernel-mode lock invariants. Both human researchers and static analysis tools have historically struggled in these areas.

The Shift from Models to Systems

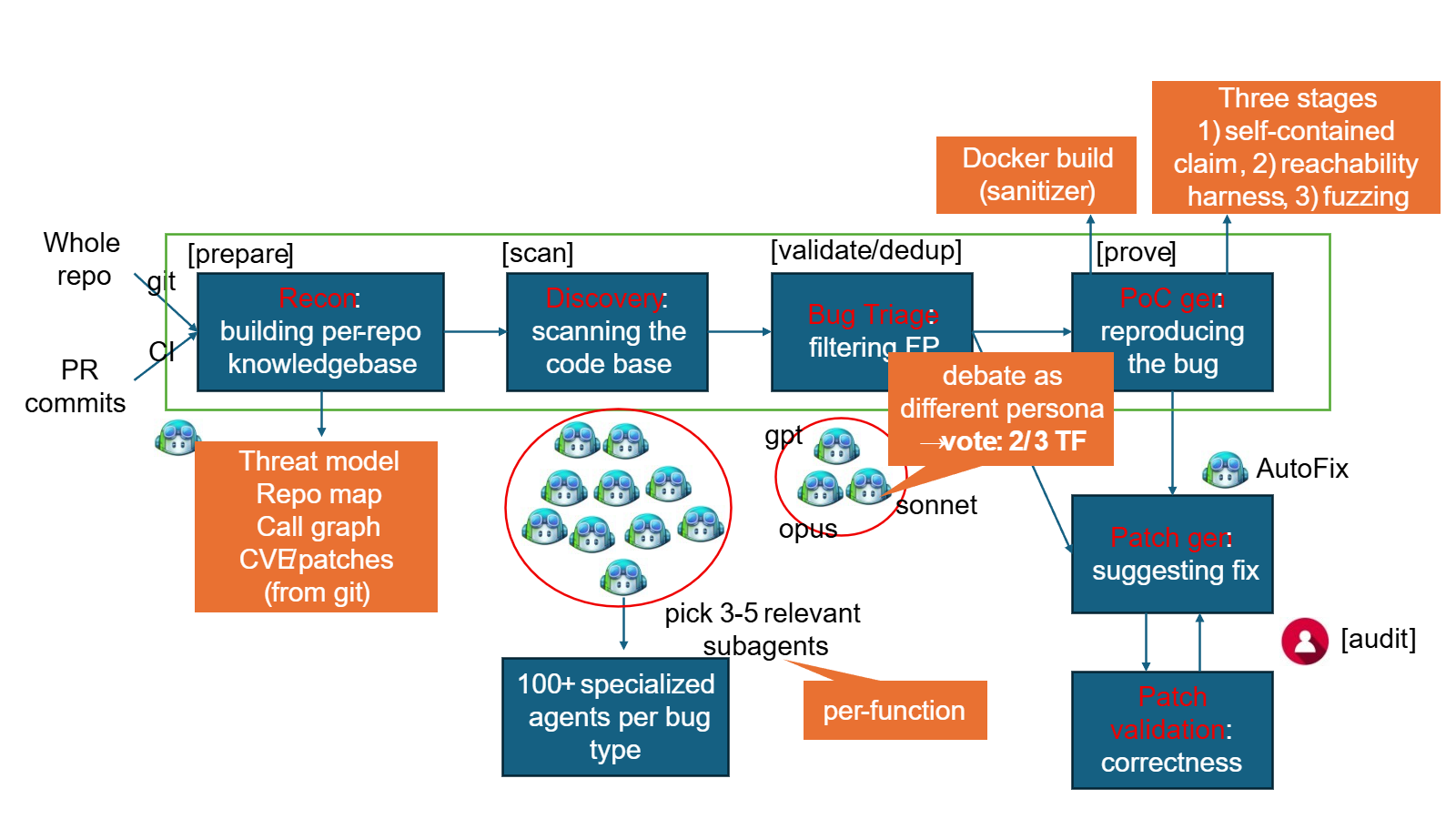

A critical insight from this announcement is the move away from monolithic model performance toward agentic orchestration. The MDASH architecture does not rely on a single master model. Instead, it utilizes a heterogeneous pipeline:

* Frontier models handle high-level logic and complex reasoning.
* Distilled models perform high-volume, cost-effective scans.
* Specialized agents act as debaters that challenge candidate findings. This sharply reduces the high false-positive rates that have historically plagued AI security tools.
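The article does not publish MDASH's interfaces. The following is therefore only a minimal sketch of how the three tiers above could fit together. It assumes each model is a plain callable: `cheap_scan` stands in for a distilled model and `deep_reason` for a frontier model. Each debater simply accepts or rejects a finding, and a majority vote decides. The names `Finding`, `debate`, `triage` and the `quorum` parameter are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Finding:
    # Hypothetical record for a candidate bug; not MDASH's real schema.
    file: str
    function: str
    bug_class: str  # e.g. "use-after-free", "double-free"
    evidence: str


# Hypothetical debater: any callable that accepts (True) or rejects (False) a finding.
Debater = Callable[[Finding], bool]


def debate(finding: Finding, debaters: List[Debater], quorum: float = 0.5) -> bool:
    """Keep a finding only if strictly more than `quorum` of the debaters accept it."""
    if not debaters:
        return False  # no reviewers means the finding is never validated
    votes = sum(1 for d in debaters if d(finding))
    return votes / len(debaters) > quorum


def triage(
    functions: List[str],
    cheap_scan: Callable[[str], bool],
    deep_reason: Callable[[str], Optional[Finding]],
    debaters: List[Debater],
) -> List[Finding]:
    # Tier 1: a cheap (distilled) model screens every function.
    suspects = [fn for fn in functions if cheap_scan(fn)]
    # Tier 2: an expensive (frontier) model reasons only about the suspects.
    candidates = [deep_reason(fn) for fn in suspects]
    # Tier 3: debaters challenge each candidate; only survivors are reported.
    return [c for c in candidates if c is not None and debate(c, debaters)]
```

With this layering, the cost of the frontier model grows with the number of suspects rather than the size of the codebase. The trade-off is that anything the cheap screen misses is never reconsidered.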

This multi-stage approach mimics a collaborative human security team. Its stages are preparation, scanning, validation, deduplication, and formal proof. Domain-specific plugins inject knowledge of Windows kernel conventions, which helps the system mitigate the hallucination problems typical of foundation models tasked with technical zero-day research.
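The five stages can be pictured as a simple chain of filters. What follows is a hypothetical sketch, not Microsoft's implementation. It assumes each stage is a caller-supplied function, and the deduplication key `(file, function, bug_class)` is an illustrative assumption. The names `run_pipeline`, `deduplicate`, and the `detail` field are invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Finding:
    # Hypothetical finding record; `detail` is the scanner's free-text note.
    file: str
    function: str
    bug_class: str
    detail: str


def deduplicate(findings: Iterable[Finding]) -> List[Finding]:
    """Collapse reports of the same bug class in the same function.

    The (file, function, bug_class) key is an illustrative assumption: it can
    merge two genuinely distinct bugs of the same class in one function.
    """
    seen = set()
    unique = []
    for f in findings:
        key = (f.file, f.function, f.bug_class)
        if key not in seen:
            seen.add(key)
            unique.append(f)  # keep the first report and preserve order
    return unique


def run_pipeline(
    sources: List[str],
    prepare: Callable[[List[str]], List[str]],
    scan: Callable[[str], List[Finding]],
    validate: Callable[[Finding], bool],
    prove: Callable[[Finding], bool],
) -> List[Finding]:
    units = prepare(sources)                         # 1. preparation
    raw = [f for unit in units for f in scan(unit)]  # 2. scanning
    validated = [f for f in raw if validate(f)]      # 3. validation
    unique = deduplicate(validated)                  # 4. deduplication
    return [f for f in unique if prove(f)]           # 5. formal proof
```

In this sketch, deduplication runs before the proof stage. As a result, the (presumably most expensive) proof step is attempted once per distinct bug rather than once per raw report.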

Implications for the Industry

Microsoft’s progress with MDASH, which is led by alumni of the DARPA AI Cyber Challenge, suggests that autonomous cyber-reasoning is moving rapidly from academic proof-of-concept into production workflows. With a reported 100% recall rate on `tcpip.sys` benchmarks, the system appears to outperform conventional approaches at finding bugs that remain buried in legacy codebases.
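To be precise about what a recall figure does and does not claim, here are the standard definitions. Here $TP$, $FN$ and $FP$ are true positives, missed bugs and false alarms. The article does not describe how the benchmark's ground-truth set was built.

```latex
\text{recall} = \frac{TP}{TP + FN}, \qquad \text{precision} = \frac{TP}{TP + FP}
```

A recall of 100% means $FN = 0$: every known bug in the benchmark was flagged. Recall places no constraint on $FP$, so on its own it says nothing about false-positive rates. That property is captured by precision, the quantity the debate stage is meant to improve.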

For the security industry, this points to a shrinking window between code deployment and exploitability. As enterprises integrate agentic systems into their CI/CD pipelines, AI agents could increasingly verify security-by-design automatically, before any binary ships to end users. The same trend forces a reality check on the offensive side. If large vendors are standardizing on these tools, threat actors are likely racing to build equivalent agentic discovery frameworks of their own, or to find ways to evade them.