The Strategic Realignment of Cloud Infrastructure

Amazon Web Services (AWS) has secured a significant multiyear commitment from Meta Platforms, marking a pivot in how major hyperscalers meet the ballooning compute demands of generative AI. By moving substantial CPU-intensive workloads to AWS’s proprietary Graviton infrastructure, Meta is shifting away from general-purpose x86 processors toward custom-designed silicon built for efficiency at massive scale.

The deal is reportedly worth billions of dollars and covers tens of millions of Graviton cores. The partnership reflects a shift toward vertical integration, in which cloud providers and AI developers collaborate to optimize the entire hardware stack from the chip upward.

The Technical Edge of Graviton5

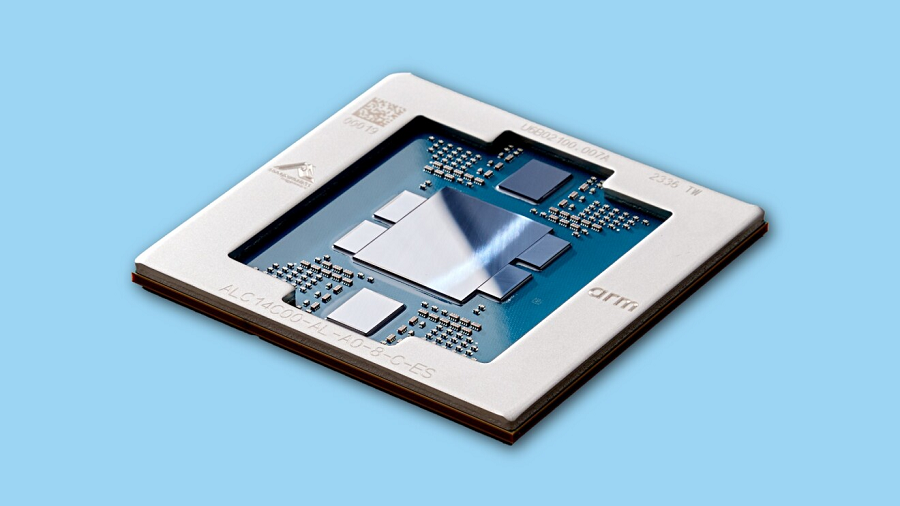

At the heart of this deployment is Graviton5, the latest generation of AWS’s internally developed server processors. Built on a 3 nm process, the chip integrates 192 cores based on the Arm instruction set architecture (ISA).
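To put the reported scale in perspective, the core count can be converted into a rough number of processors. The sketch below is an illustration only: it assumes every core in the deal sits on a 192-core Graviton5 chip and reads "tens of millions" as a range starting at 10 million cores. The helper `processors_needed` is hypothetical, and the inputs are not reported figures.

```python
import math

# Per-chip core count cited for Graviton5; assumes all cores in the deal are Graviton5.
CORES_PER_GRAVITON5 = 192


def processors_needed(total_cores: int, cores_per_chip: int = CORES_PER_GRAVITON5) -> int:
    """Hypothetical helper: minimum whole processors needed to supply total_cores."""
    # Round up, because a partial chip still has to be a whole chip.
    return math.ceil(total_cores / cores_per_chip)


# Illustrative readings of "tens of millions" of cores, not reported numbers.
for cores in (10_000_000, 20_000_000, 50_000_000):
    print(f"{cores:>12,} cores -> {processors_needed(cores):>9,} Graviton5 chips")
```

Under these assumptions, 10 million cores corresponds to 52,084 chips, so even the low end of the reported range implies a fleet of tens of thousands of processors.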

The architectural improvements in Graviton5 are more than incremental. By enlarging the L3 cache fivefold compared with its predecessor, AWS targets the memory wall: the gap between processor speed and memory latency that leaves cores stalled while they wait for data to arrive from main memory (DRAM). A larger last-level cache keeps more of a workload’s working set on the chip, so fewer accesses pay that penalty. In addition, the inclusion of dedicated matrix and vector extensions from the Arm ISA lets these CPUs execute AI-oriented math, such as the matrix multiplications at the core of neural-network inference, far more efficiently than processors that lack them.
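Why a bigger cache helps with the memory wall can be shown with the textbook average memory access time (AMAT) model: AMAT = hit time + miss rate × miss penalty. The sketch below treats the L3 as a single cache level in front of DRAM, which is a simplification. All latencies and miss rates are hypothetical values chosen for illustration, not Graviton5 measurements, and a fivefold larger cache does not imply a fivefold lower miss rate; the real effect depends on each workload’s working set.

```python
def amat(hit_time_ns: float, miss_rate: float, miss_penalty_ns: float) -> float:
    """Average memory access time for one cache level backed by DRAM."""
    return hit_time_ns + miss_rate * miss_penalty_ns


# Hypothetical latencies for illustration only.
DRAM_PENALTY_NS = 100.0  # assumed extra cost of fetching from main memory
L3_HIT_NS = 10.0         # assumed L3 hit latency

# Assumed miss rates: a larger cache holds more of the working set, so fewer misses.
smaller_cache = amat(L3_HIT_NS, miss_rate=0.30, miss_penalty_ns=DRAM_PENALTY_NS)
larger_cache = amat(L3_HIT_NS, miss_rate=0.10, miss_penalty_ns=DRAM_PENALTY_NS)

print(f"smaller L3: {smaller_cache:.1f} ns average access time")
print(f"larger L3:  {larger_cache:.1f} ns average access time")
```

With these made-up inputs, cutting the miss rate from 30% to 10% halves the average access time from 40 ns to 20 ns, even though the hit latency is unchanged, which is the mechanism a larger cache relies on.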

Architectural Efficiency via the Nitro System

Meta’s decision to commit to this infrastructure is largely driven by the operational advantages of the AWS Nitro System. In traditional cloud environments, the hypervisor and its supporting software consume a significant share of each host’s CPU and memory to manage networking, storage, and security.

Nitro offloads these management tasks to dedicated hardware cards and a lightweight hypervisor. For Meta, this means more of each server’s CPU capacity is available for AI agents and application logic rather than virtualization housekeeping. In addition, the Nitro Isolation Engine adds a hardware-enforced security boundary that keeps Meta’s workloads isolated from other tenants in the shared public cloud.
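The capacity argument for offloading reduces to simple arithmetic: whatever cores the host reserves for I/O and management are unavailable to customer workloads. The sketch below compares a hypothetical software-only hypervisor that pins 16 of 192 cores to that work with an idealized offloaded design that reserves none. The 16-core figure, the zero-reservation idealization, and the helper `guest_available_cores` are assumptions for illustration, not published Nitro or Graviton5 numbers.

```python
def guest_available_cores(host_cores: int, reserved_for_virtualization: int) -> int:
    """Hypothetical helper: cores left for workloads after host-side reservations."""
    if not 0 <= reserved_for_virtualization <= host_cores:
        raise ValueError("reserved cores must be between 0 and host_cores")
    return host_cores - reserved_for_virtualization


HOST_CORES = 192  # one Graviton5 processor, per the core count cited earlier

# Assumed: a software hypervisor pinning 16 cores to networking, storage and
# management, versus an idealized offload design that moves that work to
# dedicated cards and reserves no host cores.
software = guest_available_cores(HOST_CORES, reserved_for_virtualization=16)
offloaded = guest_available_cores(HOST_CORES, reserved_for_virtualization=0)

print(f"software hypervisor: {software} cores ({software / HOST_CORES:.1%})")
print(f"offloaded I/O:       {offloaded} cores ({offloaded / HOST_CORES:.1%})")
```

Under these assumptions the offloaded host gives workloads all 192 cores instead of 176, roughly an 8% gain per server, which compounds across a fleet of the size described above.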

Implications: A Divergent Infrastructure Strategy

This deal signals a broader industry trend: the move toward heterogeneous computing. Meta’s strategy appears driven by a desire to avoid lock-in to traditional silicon vendors. By spreading its workloads across both AWS Graviton cores and Arm’s AGI processors, Meta is building a versatile compute foundation.

Meta’s ability to influence the roadmap of future chip generations suggests that the company is no longer content to build software atop off-the-shelf hardware. Instead, it is positioning itself as a co-architect of the data center. By partnering with AWS to run agentic AI, the emerging class of software in which AI agents plan and carry out multistep workflows autonomously, Meta is betting that custom, energy-efficient silicon will be the primary driver of profitability in the high-stakes AI race.

This shift has profound implications for the semiconductor market. As Meta and other hyperscalers bring chip design in-house or adopt specialized custom architectures, demand for traditional general-purpose processors is likely to decouple from the growth of AI compute requirements. The collaboration between AWS and Meta underscores that the future of the cloud is being written in silicon designed specifically for the era of autonomous, agent-based AI.