Cerebras Systems IPO Pivot Signals Massive Investor Appetite for Inference-Focused Silicon

Cerebras Systems is reportedly recalibrating its public offering strategy to capitalize on intense institutional demand. According to recent reports, the Sunnyvale-based AI hardware firm is considering raising its price range from an initial $115–$125 per share to $150–$160. By potentially expanding the offering to 30 million shares, Cerebras is positioning itself to raise approximately $4.8 billion at the top of the new range, a significant jump from its initial $3.5 billion projection.
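As a quick sanity check on the reported figures, the gross raise is simply shares offered multiplied by price per share. The sketch below uses only the numbers quoted above; the function name `gross_proceeds` is illustrative, and underwriting fees, over-allotment options, and any secondary shares are ignored as a simplifying assumption.

```python
# Back-of-the-envelope check of the reported offering figures.
# Inputs are the numbers quoted in the article; fees, over-allotment
# options, and secondary shares are ignored (simplifying assumption).

def gross_proceeds(shares: int, price_per_share: float) -> float:
    """Gross proceeds in dollars: shares sold times price per share."""
    return shares * price_per_share

SHARES_OFFERED = 30_000_000      # reported expanded offering size
REVISED_RANGE = (150.0, 160.0)   # reported revised price range, USD

low = gross_proceeds(SHARES_OFFERED, REVISED_RANGE[0])
high = gross_proceeds(SHARES_OFFERED, REVISED_RANGE[1])

print(f"Revised range raises ${low / 1e9:.1f}B to ${high / 1e9:.1f}B")
```

On these inputs, the $4.8 billion figure corresponds to pricing at the very top of the revised range.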

With reports indicating that the IPO is oversubscribed by a factor of 20, the market is signaling a clear shift in sentiment: investors are no longer content with just backing foundational model builders; they are hunting for the specialized compute infrastructure required to power the transition from training to production.

Architectural Differentiation: Moving Beyond the GPU Paradigm

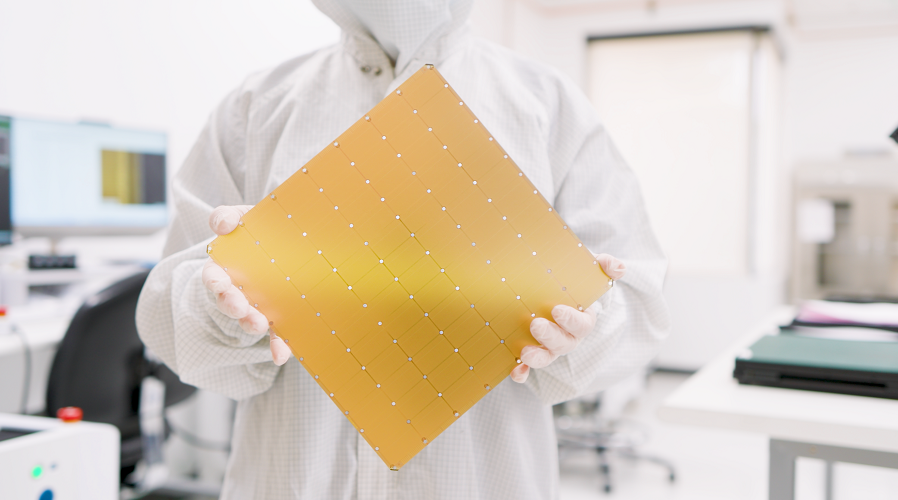

The core of the Cerebras value proposition lies in its divergence from standard graphics processing unit (GPU) architectures. While Nvidia has dominated the market with its Blackwell B200 units, Cerebras has staked its reputation on the Wafer-Scale Engine (WSE-3), a processor built as a single, wafer-sized piece of silicon rather than a cluster of smaller chips.

The technical edge here is the integration of a 44-gigabyte pool of high-speed SRAM located directly on the processor. By placing memory in such tight proximity to the compute cores, Cerebras achieves single-clock-cycle memory access latency. That matters most for inference, the work of running a trained model to generate responses in real time. Because data does not need to traverse a bottlenecked bus to reach the memory, each response spends less time waiting on memory, which directly addresses one of the compute inefficiencies currently plaguing large-scale AI deployment.
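To see why memory placement matters for inference, consider a common rough approximation: when generating one token at a time for a single request, a dense model has to read essentially all of its weights once per token, so the time per token is bounded below by weight size divided by memory bandwidth. The sketch below illustrates that bound. Every numeric input is a hypothetical placeholder chosen for round arithmetic, not a Cerebras or Nvidia specification, and the function names are illustrative.

```python
# Illustrative lower bound on per-token latency for memory-bound decoding.
# All numeric inputs are hypothetical placeholders, NOT vendor specifications.

def min_decode_latency_s(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Time to stream every weight once, ignoring compute, caching, and batching."""
    return weight_bytes / bandwidth_bytes_per_s

def max_tokens_per_s(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream tokens per second under the same assumptions."""
    return 1.0 / min_decode_latency_s(weight_bytes, bandwidth_bytes_per_s)

MODEL_WEIGHT_BYTES = 16e9   # hypothetical: 8 billion parameters at 2 bytes each
OFF_CHIP_BANDWIDTH = 4e12   # hypothetical off-chip memory bandwidth, bytes/s
ON_CHIP_BANDWIDTH = 4e15    # hypothetical on-chip SRAM bandwidth, bytes/s

for label, bandwidth in [("off-chip", OFF_CHIP_BANDWIDTH), ("on-chip", ON_CHIP_BANDWIDTH)]:
    latency = min_decode_latency_s(MODEL_WEIGHT_BYTES, bandwidth)
    rate = max_tokens_per_s(MODEL_WEIGHT_BYTES, bandwidth)
    print(f"{label}: at least {latency * 1e3:.3f} ms per token, at most {rate:,.0f} tokens/s")
```

The absolute numbers are not meaningful. The point is that under this model the bound scales inversely with bandwidth, so moving weights closer to the compute cores raises the ceiling on single-stream throughput. Real systems batch requests and overlap compute with memory traffic, which this sketch deliberately ignores.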

The Shift Toward Production-Grade AI

Cerebras’s rapid financial turnaround highlights an industry-wide pivot. After a $485 million loss in 2023, the company reported net income of $87.9 million in 2025 on $290.3 million in revenue, a 76% increase. The return to profitability is consistent with the AI gold rush shifting from its training phase toward its inference phase, though a single company's results do not prove the trend on their own.

Data centers are increasingly prioritizing throughput for concurrent user queries over the massive batch-parallelism required for initial model training. By optimizing its CS-3 systems (the 1.8-ton appliances housing its wafers) specifically for these inference workloads, Cerebras has diversified its client base. The presence of heavyweights such as OpenAI and Amazon Web Services among its clients suggests that these infrastructure builders are actively seeking alternatives to the standard GPU supply chain.

From Regulatory Hurdles to Market Dominance

The road to this public listing has been far from linear. A prior attempt to go public was derailed by concerns surrounding a heavy revenue dependency on G42, an Abu Dhabi-based firm. While the subsequent national security review added a layer of geopolitical complexity, it ultimately provided a clean bill of health from the U.S. government.

This period of scrutiny forced Cerebras to mature its business model. By onboarding large institutional customers, the company has reduced its reliance on a single buyer and exposed its technology stack to some of the most demanding users in the industry.

Market Implications

As Cerebras prepares for its debut on the Nasdaq under the ticker CBRS, the company stands as a bellwether for the next stage of the AI hardware market. If the current IPO enthusiasm translates into a successful market launch, it would be strong evidence that specialized, wafer-scale silicon is a commercially viable competitor to general-purpose GPU architectures.

With Morgan Stanley, Citigroup, Barclays, and UBS acting as the architects of this offering, the financial sector is betting heavily that demand for inference-specific compute will persist long after the current hype cycle. A successful listing would mark the entry of a formidable new player into the enterprise AI infrastructure ecosystem.