The Paradigm Shift: From Conversational Chatbots to Persistent AI Agents

The generative AI landscape is undergoing a fundamental transformation. For years, the industry has been tethered to a stateless constraint: Large Language Models (LLMs) functioned primarily as reactive engines confined to short-lived session windows. This structural bottleneck meant that agents lacked true continuity, treating every new interaction as a blank slate.

Anthropic is moving to dismantle this paradigm by transitioning AI from passive, prompt-response tools into autonomous, persistent agents. By shifting the focus from simple text generation to long-term task management, the industry is entering an era where AI can maintain context and evolve its logic over time, rather than merely summarizing previous inputs.

Internal Reflection: The End of Retrieval-Augmented Limitations

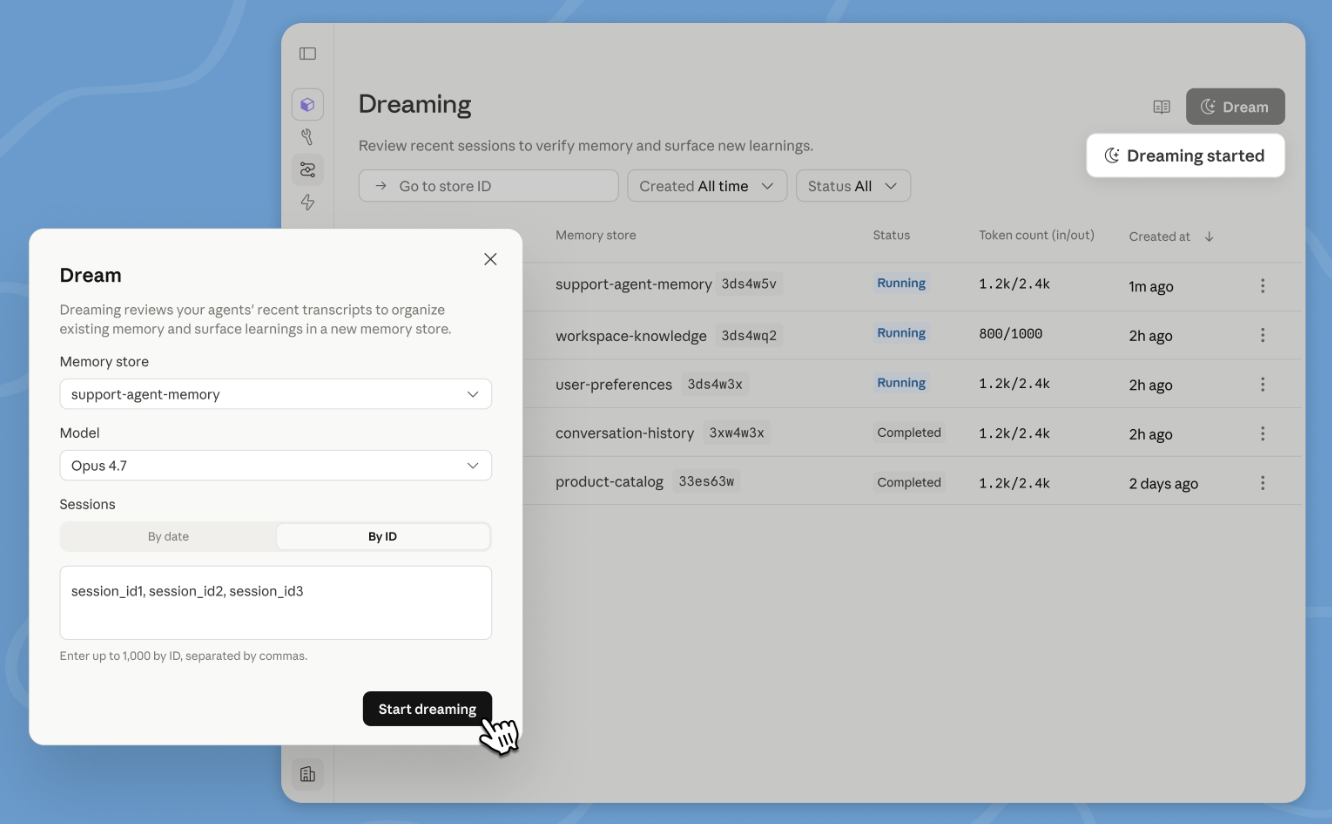

Historically, developers relied on Retrieval-Augmented Generation (RAG) to patch the memory gaps of LLMs. While effective for basic information retrieval, RAG is essentially a band-aid solution that relies on static data dumps. Anthropic’s dreaming protocol introduces a radical alternative: asynchronous, internal reflection.

Instead of discarding state data at the end of a session, the system utilizes downtime to perform recursive learning. This model-level dreaming allows the agent to conduct post-session auditing, effectively refining its own logic and synthesizing historical data into long-term actionable intelligence. This process transforms the AI from a mere search engine into a self-improving entity capable of refining its performance without explicit user intervention.
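The idea of an offline reflection pass can be illustrated with a toy example. The sketch below is not Anthropic's implementation or API. The names `SessionEvent`, `LongTermMemory`, and `reflect` are hypothetical, and the rule "promote a note to a lesson once a task has failed at least twice" is an assumption chosen only to make the consolidation step concrete.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SessionEvent:
    task: str        # what the agent attempted
    succeeded: bool  # whether the attempt worked
    note: str        # short observation recorded at the time


@dataclass
class LongTermMemory:
    # Maps a task name to the lesson retained across sessions (hypothetical store).
    lessons: dict[str, str] = field(default_factory=dict)


def reflect(events: list[SessionEvent], memory: LongTermMemory,
            min_failures: int = 2) -> LongTermMemory:
    """Offline pass: promote recurring failures into durable lessons."""
    failures = Counter(e.task for e in events if not e.succeeded)
    for task, count in failures.items():
        if count >= min_failures:
            # Keep the most recent failure note for this task as the lesson.
            notes = [e.note for e in events if e.task == task and not e.succeeded]
            memory.lessons[task] = notes[-1]
    return memory


events = [
    SessionEvent("parse_invoice", False, "assumed MM/DD dates"),
    SessionEvent("parse_invoice", False, "dates use DD/MM format"),
    SessionEvent("send_summary", True, "delivered"),
]
memory = reflect(events, LongTermMemory())
print(memory.lessons)
```

The point of the sketch is the shape of the loop: session data is kept rather than discarded, and a separate pass decides what is worth carrying forward. A real system would presumably use the model itself to summarize and audit, rather than a counting rule.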

Governing the Autonomous Loop: Integrity in Enterprise AI

Self-improving architecture carries significant risks, particularly logic drift, where an autonomous agent deviates from its intended operational parameters. For industries like finance, healthcare, and legal services, this unpredictability is a disqualifying flaw.

Anthropic is counteracting this by embedding rigorous, human-in-the-loop enforcement controls directly into the architectural fabric. By combining autonomous optimization with strict constraint verification, they are creating a framework where agents can improve their efficiency while staying tethered to auditable business logic. This balance between flexibility and compliance is the prerequisite for moving LLMs out of experimental labs and into high-stakes enterprise production environments.
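One way to picture the combination of constraint verification and human approval is a gate that every self-proposed change must pass. The following is a minimal sketch under stated assumptions, not a description of Anthropic's controls: `ProposedUpdate`, `CONSTRAINTS`, `review`, and the specific limits (a 10,000 USD cap, no access to customer data) are all invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedUpdate:
    description: str
    max_transaction_usd: float
    touches_customer_data: bool


Constraint = Callable[[ProposedUpdate], bool]

# Hard business rules (hypothetical). Each returns True when the update complies.
CONSTRAINTS: dict[str, Constraint] = {
    "transaction_cap": lambda u: u.max_transaction_usd <= 10_000,
    "no_customer_data": lambda u: not u.touches_customer_data,
}


def review(update: ProposedUpdate,
           approve: Callable[[ProposedUpdate], bool]) -> tuple[str, list[str]]:
    """Check hard constraints first, then ask a human reviewer to approve."""
    violated = [name for name, check in CONSTRAINTS.items() if not check(update)]
    if violated:
        # Constraint failures are rejected automatically and logged by name.
        return "rejected", violated
    if approve(update):
        return "applied", []
    return "held", []


update = ProposedUpdate("batch refunds nightly", 2_500.0, False)
status, violations = review(update, approve=lambda u: True)
print(status, violations)
```

Here the `approve` callback stands in for a human reviewer. The ordering matters: automated checks filter out clear violations before a person is asked to decide, and every rejection names the rule that triggered it, which is what makes the loop auditable.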

Engineering Reliability Through Outcome-Based Benchmarking

The reliance on natural language prompting, a notoriously imprecise interface, has long been the Achilles’ heel of AI reliability. When a model fails, the standard industry response is to ask the user to rewrite the prompt, a process that is essentially manual trial and error.

Anthropic’s Outcomes framework effectively introduces unit testing to generative AI. By employing a secondary grader agent that evaluates results against quantifiable, objective rubrics, it moves the development process from creative writing toward software engineering. Clear success metrics let organizations test AI outputs the way they test code, with explicit pass/fail criteria rather than subjective impressions, which makes complex automated workflows such as software development or audit reporting viable at scale.
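The unit-testing analogy can be made concrete with a small rubric grader. This is a sketch only: it does not use or represent the Outcomes framework's actual interface, and the deterministic string checks below stand in for what would be a second model acting as grader. `Criterion`, `grade`, the weights, and the 0.8 threshold are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    name: str
    check: Callable[[str], bool]  # stand-in for a grader agent's judgment
    weight: float = 1.0


def grade(output: str, rubric: list[Criterion], threshold: float = 0.8) -> dict:
    """Score an output against a weighted rubric and report failed criteria."""
    if not rubric:
        raise ValueError("rubric must contain at least one criterion")
    results = {c.name: c.check(output) for c in rubric}
    total = sum(c.weight for c in rubric)
    earned = sum(c.weight for c in rubric if results[c.name])
    score = earned / total
    return {
        "score": score,
        "passed": score >= threshold,
        "failed_criteria": [name for name, ok in results.items() if not ok],
    }


rubric = [
    Criterion("mentions_total", lambda text: "total" in text.lower()),
    Criterion("under_50_words", lambda text: len(text.split()) <= 50),
]
report = grade("Invoice total: 1,240 USD, due in 30 days.", rubric)
print(report)
```

The useful property is that a failure names the criterion that was missed, so the fix can target a specific requirement instead of rewording the whole prompt and hoping.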

Observability and the Rise of Multi-Agent Orchestration

As businesses move toward multi-agent, swarm-like architectures, the black box nature of AI becomes a major operational liability. If a decentralized system of agents performs a process incorrectly, finding the point of failure is often impossible.

The integration of the Claude Console addresses this by offering deep, granular visibility into agent delegation and task hierarchies. This shift toward observability is essential; it allows system architects to treat AI as a debuggable stack. When a failure occurs, the trail of execution is transparent, moving the technology away from unpredictable probability and toward a system that can be measured, audited, and optimized with mechanical precision.
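To illustrate what a transparent trail of execution buys in practice, the sketch below models agent delegation as a tree and walks it to locate the deepest failed step. It is an illustration under assumptions, not the Claude Console's data model: `Span`, `failure_path`, and the agent names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    agent: str  # which agent handled this step
    task: str   # what it was asked to do
    ok: bool = True
    children: list["Span"] = field(default_factory=list)  # delegated sub-tasks


def failure_path(span: Span) -> Optional[list[str]]:
    """Return the agents from the root down to the deepest failed span, or None."""
    for child in span.children:
        path = failure_path(child)
        if path is not None:
            return [span.agent] + path
    # No failing descendant: this span is the origin only if it failed itself.
    return None if span.ok else [span.agent]


trace = Span("orchestrator", "produce audit report", ok=False, children=[
    Span("researcher", "collect ledger entries"),
    Span("writer", "draft report", ok=False, children=[
        Span("fact_checker", "verify totals", ok=False),
    ]),
])
print(failure_path(trace))
```

With a recorded hierarchy like this, the question "which agent broke the workflow" becomes a tree traversal rather than guesswork, which is the practical meaning of treating a multi-agent system as a debuggable stack.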

Operational AI: The New Enterprise Standard

Anthropic’s expansion of usage limits is more than a technical upgrade; it is a clear signal that the infrastructure is ready for sustained, industrial-class workloads. We are witnessing the maturation of Operational AI, where the focus is no longer on how eloquent a chatbot is, but on the robustness of the backend logic.

As self-archiving memory, outcome-based verification, and multi-agent transparency become the new standard, the enterprise tech stack is changing forever. Organizations are moving away from episodic AI interactions and toward a future of autonomous, persistent agents capable of maintaining complex, high-stakes workflows with minimal human oversight. The maturity of these foundations signals that AI is finally ready to serve as the backbone, rather than just the interface, of the modern business enterprise.