The Strategic Evolution of GPT-5.5 Instant: Moving Beyond the Hype Cycle

OpenAI’s decision to bypass the anticipated GPT-5.4 iteration in favor of GPT-5.5 Instant is more than a branding adjustment or a leap over minor versions; it represents a fundamental departure from the era of big-model spectacle. By prioritizing this specific release, the organization is acknowledging that the market for Large Language Models (LLMs) has matured. The industry is no longer clamoring for broad, generalized intelligence; it is demanding operational consistency, architectural stability, and verifiable utility.

This shift signifies a transition away from the race for parameter supremacy toward a focus on architectural hardening. For OpenAI, the objective is to reposition its platform as a core component of enterprise infrastructure, where the margin for error is near zero.

Tackling the Hallucination Deficit in High-Stakes Verticals

The primary roadblock to the enterprise-wide adoption of generative AI has long been the persistence of hallucinations—the model’s propensity to prioritize syntactic fluency over factual integrity. In sectors like legal compliance, diagnostic medicine, and quantitative finance, a creative falsehood is a liability, not a feature.

Reported benchmark results suggest that the Instant architecture is moving the needle. The HealthBench score rose from 32.9 to 38.4, a meaningful gain on a benchmark built around health-related responses. Coupled with a documented 37.3% reduction in factual error rates, this suggests that OpenAI has reprioritized its Reinforcement Learning from Human Feedback (RLHF) protocols: rather than optimizing solely for conversational engagement, developers appear to be training the model to treat factual accuracy as the primary success metric. This data-driven pivot provides the quantifiable reliability that institutional buyers need to justify enterprise-wide integration.
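To put those figures in perspective, the short Python sketch below works through the arithmetic. It uses only the two HealthBench scores and the 37.3% figure quoted above; the function names are illustrative, and reading the 37.3% as a relative reduction against the previous model's error rate is an assumption, since the baseline rate itself is not given here.

```python
def absolute_gain(before: float, after: float) -> float:
    """Point difference between two benchmark scores."""
    return after - before


def relative_gain_pct(before: float, after: float) -> float:
    """Improvement expressed as a percentage of the earlier score."""
    return (after - before) / before * 100


def remaining_error_fraction(reduction_pct: float) -> float:
    """Share of the old error rate that is left after a relative reduction.

    Assumes the reduction is relative to the previous model's error rate.
    """
    return 1 - reduction_pct / 100


if __name__ == "__main__":
    before, after = 32.9, 38.4  # HealthBench scores quoted in the text
    print(f"Absolute gain: {absolute_gain(before, after):.1f} points")
    print(f"Relative gain: {relative_gain_pct(before, after):.1f}%")
    print(f"Errors remaining: {remaining_error_fraction(37.3):.3f} of the old rate")
```

In other words, the HealthBench gain is 5.5 points, or roughly 17% relative to the earlier score, and under the stated assumption the model would still make a little under two thirds of the factual errors its predecessor made. That is a real improvement, but not an elimination of the problem.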

The Professionalization of Model Persona

Historically, the consumer-facing interface of ChatGPT suffered from conversational bloat. The tendency of the model to utilize emojis, repetitive polite filler, and overly verbose explanations made it ill-suited for high-density analytical workflows.

The aesthetic and functional refinement seen in GPT-5.5 Instant addresses this by stripping away stylistic noise. By favoring brevity, precision, and a high signal-to-noise ratio, OpenAI is signaling that it views the model as an analytical asset rather than a digital companion. In professional environments where time carries a direct cost, a model that communicates in blunt, reliable, and concise terms is far more valuable than one that mimics human rapport.

Data Governance and the Agentic Memory Paradox

The implementation of personal knowledge agents—which allow models to query private archives like emails and local documents—introduces a complex tension between productivity and security. While this enables the agentic capabilities users now expect, it creates significant friction for risk-averse IT departments.

OpenAI’s response has been to integrate context-tagging and granular data controls. This is a deliberate defensive strategy designed to reassure enterprise stakeholders. By providing visual provenance for the information a model cites, OpenAI is establishing a framework of accountability. However, the true test for adoption remains the ability to toggle these features, ensuring that proprietary corporate intelligence remains siloed while still benefiting from AI-assisted synthesis.

The Operational Reality: Managing Ephemeral Infrastructures

The introduction of the Instant tier alongside the Thinking and Pro tiers marks a sophisticated strategy for managing compute overhead. However, this level of product segmentation brings a new operational burden to the enterprise: the end of the static model.

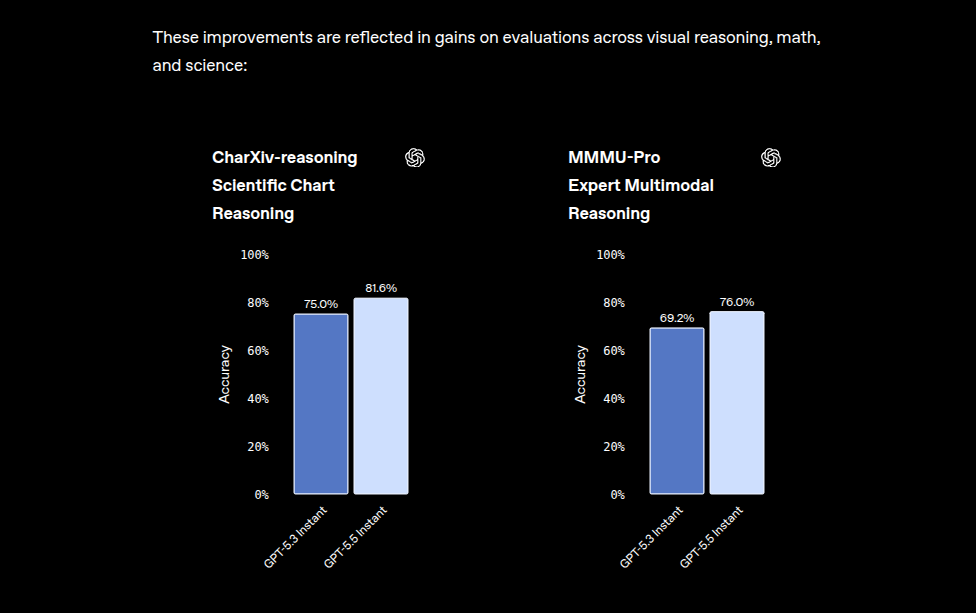

The rapid deprecation of GPT-5.3 demonstrates that model behavior is no longer predictable over long time horizons. For businesses, this creates a requirement for a revalidation loop. Organizations cannot simply deploy an LLM-based tool and expect it to behave identically in six months. Any company integrating these systems into a production workflow must now adopt rigorous, iterative validation protocols.
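One way to picture such a revalidation loop is as a small regression suite that is rerun whenever the underlying model changes. The Python sketch below is a minimal illustration under stated assumptions, not a recommended implementation: `GoldenCase`, `revalidate`, the substring-based check, and the 95% pass threshold are all hypothetical, and `call_model` stands in for whatever client function an organization actually uses to query its model.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class GoldenCase:
    """A prompt plus the phrases a correct answer is expected to contain."""
    prompt: str
    required_phrases: List[str]


def revalidate(
    call_model: Callable[[str], str],  # hypothetical wrapper around the model API
    cases: List[GoldenCase],
    min_pass_rate: float = 0.95,       # illustrative threshold, not a standard
) -> Tuple[bool, float, List[Tuple[str, List[str]]]]:
    """Run every golden case and report whether the model still passes.

    Returns (passed, pass_rate, failures), where each failure pairs the
    prompt with the required phrases missing from the model's answer.
    """
    failures = []
    for case in cases:
        answer = call_model(case.prompt).lower()
        missing = [p for p in case.required_phrases if p.lower() not in answer]
        if missing:
            failures.append((case.prompt, missing))
    pass_rate = 1 - len(failures) / len(cases) if cases else 1.0
    return pass_rate >= min_pass_rate, pass_rate, failures


if __name__ == "__main__":
    # Stub model used only so the sketch runs without network access.
    def stub_model(prompt: str) -> str:
        return "The retention period is seven years." if "retention" in prompt else ""

    suite = [GoldenCase("What is the records retention period?", ["seven years"])]
    passed, rate, failed = revalidate(stub_model, suite)
    print(passed, rate, failed)
```

Substring matching is deliberately crude; a production suite would more likely use domain-specific graders or human review. The point of the sketch is the workflow: a fixed set of cases, an explicit threshold, and a rerun on every model update or deprecation notice.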

OpenAI’s long-term success will ultimately hinge on its ability to provide a stable API foundation that businesses can treat as reliable infrastructure. If OpenAI can balance its rapid, iterative deployment cycles with the stability requirements of regulated industries, it will solidify its position as the bedrock of the modern enterprise tech stack. If it fails, organizations may be forced to look toward smaller, self-hosted, or version-frozen models that offer lower performance but greater predictability.