Meta’s Strategic Pivot: Why the Acquisition of Assured Robot Intelligence Matters

Meta Platforms Inc. has acquired San Diego-based startup Assured Robot Intelligence Inc., a low-profile robotics software developer. The financial terms were not disclosed, but the move expands Meta’s footprint in physical AI. By folding the team into Meta Superintelligence Labs, the Facebook parent appears to be trying to bridge the gap between generative language models and embodied physical intelligence.

The Pedigree Behind the Acquisition

The value of this acquisition lies less in existing patents than in the expertise of its founders, Lerrel Pinto and Xiaolong Wang. Pinto is a repeat founder, having co-founded Fauna Robotics, a startup Amazon acquired earlier this year. His presence suggests firsthand knowledge of the commercialization hurdles that often derail robotics projects before they reach market scale.

Xiaolong Wang, an associate professor at UC San Diego and a former Nvidia researcher, brings academic depth. His recent work on robotic teleoperation, including research that lets users map a robot’s camera feed to a virtual reality interface, aligns closely with Meta’s long-term hardware roadmap.

Closing the Loop: Smart Glasses and Remote Intuition

Meta’s interest in Assured Robot Intelligence is likely a strategic play to leverage its existing interface hardware, namely the Meta Ray-Ban smart glasses and the accompanying Neural Band gesture controller.

By integrating robot control systems with its wearable ecosystem, Meta could bring human-in-the-loop robotics to consumer hardware. If a user can view a robot’s perspective on a heads-up display and move its limbs with subtle wrist gestures, Meta’s consumer eyewear becomes a remote operations platform. This isn’t just about building a product; it is about building the interface layer for an entire generation of physical AI.

The Qualcomm Model: Meta’s Blueprint for Robotics

Reports suggest Meta has no intention of becoming a full-stack hardware manufacturer for humanoid robots. Instead, it is positioning itself as the “Qualcomm of robotics.” By developing foundational software architectures and power-efficient silicon, such as the recent MIA500 inference accelerator, Meta aims to set the industry standard for how these machines operate.

If Meta supplies the brain (the AI models) and the nerves (the control interfaces) to external robotics manufacturers, it can collect a platform fee or royalty on every humanoid device they deploy. That model scales far better than taking on the capital expenditure and supply chains of consumer robotics manufacturing.

Advancing Toward Self-Learning Machines

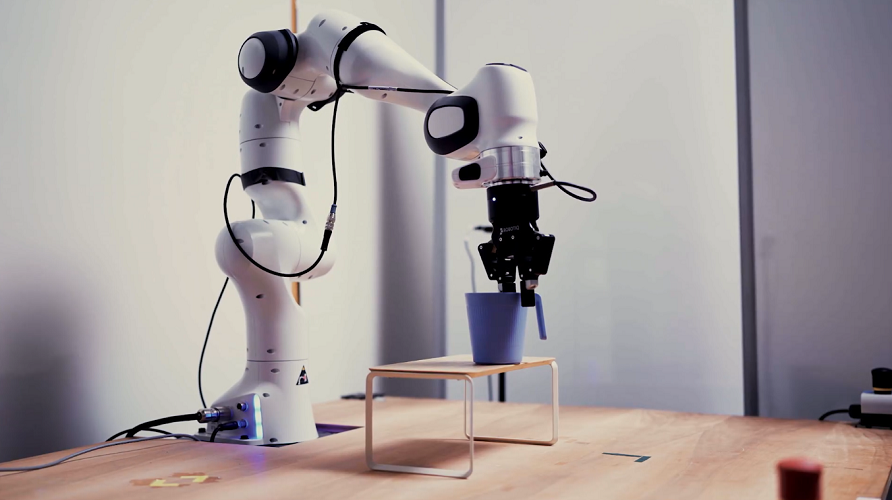

The team will focus on advancing self-learning techniques. Traditional robotics relies heavily on hard-coded instruction sets, which are rigid and prone to failure in unpredictable environments. By tasking the Assured Robot Intelligence team with training models through trial and error, Meta is aiming for general-purpose dexterity: the ability of a robot to walk into an unfamiliar room and work out how to manipulate its surroundings on its own.
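
The difference between hard-coded instructions and trial-and-error learning can be illustrated with a toy example. The sketch below is not Meta's or Assured Robot Intelligence's method; it is a minimal epsilon-greedy learner, and the grasp names and success probabilities are invented purely for illustration. The program is never told which grasp works best and has to discover it from the outcomes of its own attempts.

```python
import random

def learn_by_trial_and_error(success_prob, episodes=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy learner: estimate each action's success rate from experience."""
    rng = random.Random(seed)
    n_actions = len(success_prob)
    counts = [0] * n_actions    # how many times each action has been tried
    values = [0.0] * n_actions  # running estimate of each action's success rate
    for _ in range(episodes):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action
            action = rng.randrange(n_actions)
        else:
            # Exploit: otherwise pick the action that currently looks best
            action = max(range(n_actions), key=lambda a: values[a])
        # The simulated environment reports success (1.0) or failure (0.0)
        reward = 1.0 if rng.random() < success_prob[action] else 0.0
        counts[action] += 1
        # Incremental mean update of the estimated success rate
        values[action] += (reward - values[action]) / counts[action]
    return values, counts

# Hypothetical grasp strategies with made-up success probabilities
GRASPS = ["pinch", "power", "hook"]
TRUE_SUCCESS = [0.2, 0.8, 0.5]

values, counts = learn_by_trial_and_error(TRUE_SUCCESS)
best = GRASPS[max(range(len(GRASPS)), key=lambda a: values[a])]
print("preferred grasp:", best)
print("estimated success rates:", [round(v, 2) for v in values])
```

Real robot learning systems operate on camera images and continuous motor commands rather than three discrete choices, but the loop is the same in spirit: act, observe the result, and update the estimate.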

With the resources of the Meta Robotics Studio behind it, the team is no longer constrained by the funding cycles of a small startup. It has access to the compute infrastructure and large datasets needed to push AI beyond the screen and into the physical world. Combining self-learning models with Meta’s existing large language models could lead to the first true embodied AI assistants, capable of understanding complex, multimodal human input while physically interacting with the real world.